9 Conclusion

9.1 What is Possible Now that Wasn’t Before?

In the current state of the art, machine workflows are computationally feed-forward: we set parameters in CAM tools, they are compiled into GCode, and the machine follows that GCode to the letter. Neither the CAM tool nor the machine has formalized knowledge of machine or process physics. This means that machine users must rely on tacit knowledge, intuition, or someone else’s pre-determined settings to successfully use machines.

In this thesis I modified these workflows by adding models of key machine components: their motors, their kinematics, and their processes. Using models, we can build workflows that are fundamentally different from the state of the art. Rather than feeding parameters forwards directly at a low level, we can describe optimal outcomes for our tasks, apply heuristics at a high level, and use models and model-based control to mediate between real-time physics and our targets. This amounts - partially - to machine systems that “know what they are doing,” in that they use simulations of real world physics to choose their actions.
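The contrast between feeding a parameter forwards and letting a model mediate it can be illustrated with a minimal sketch. This is not the controller developed in this thesis: the `AxisModel` class, its linear force-speed curve, its parameter values, and the `margin` safety factor are all assumptions chosen for illustration. The feed-forward path passes the requested speed through untouched, while the model-based path asks the model for the highest speed it predicts to be achievable.

```python
from dataclasses import dataclass


@dataclass
class AxisModel:
    """Toy axis model; every parameter here is an illustrative assumption."""
    stall_force: float      # N, force available at zero speed
    no_load_speed: float    # mm/s, speed at which available force falls to zero
    friction: float         # N, constant drag
    load_per_speed: float   # N per (mm/s), speed-dependent process load

    def available_force(self, speed: float) -> float:
        # Linear force-speed curve, clamped at zero.
        return max(0.0, self.stall_force * (1.0 - speed / self.no_load_speed))

    def required_force(self, speed: float) -> float:
        return self.friction + self.load_per_speed * speed


def feed_forward_speed(requested: float) -> float:
    """Feed-forward: the requested speed is used as-is, with no physics check."""
    return requested


def model_based_speed(model: AxisModel, requested: float, margin: float = 0.8) -> float:
    """Return the highest speed <= requested that the model predicts is feasible.

    A speed is feasible when margin * available force covers the required force.
    Available force falls and required force rises with speed, so feasibility
    is monotone and bisection finds the boundary.
    """
    def feasible(speed: float) -> bool:
        return margin * model.available_force(speed) >= model.required_force(speed)

    if feasible(requested):
        return requested
    if not feasible(0.0):
        return 0.0  # the model predicts the axis cannot move at all
    lo, hi = 0.0, requested
    for _ in range(50):
        mid = 0.5 * (lo + hi)
        if feasible(mid):
            lo = mid
        else:
            hi = mid
    return lo


axis = AxisModel(stall_force=100.0, no_load_speed=200.0,
                 friction=10.0, load_per_speed=0.5)
print(feed_forward_speed(150.0))       # used blindly, whatever the physics
print(model_based_speed(axis, 150.0))  # reduced to what the model allows
```

In this sketch the user states an intent (go as fast as 150 mm/s if possible) and the model decides what is physically reasonable. A real controller would fit such a model from measurements rather than from hand-entered constants.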

Models not only enable better machine control, they can also help us to design machines by showing us hypothetical performance under different parameters. They can also help us to design better machine toolpaths by simulating them virtually before we test them in the real world.

Machines are heterogeneous: many types and models exist, and even individual instances of the same hardware will vary (and vary over time) in subtle ways. That is why a big focus in this thesis was to use the machines themselves to build models. This not only simplifies the alignment of controller expectations to reality, it also enables us to detect changes to machinery over time, watch for errors, and improve models over time.

None of this would have been possible with GCode-based machine controllers. The work in Chapter 2, Section 3.2, Section 3.3, and Chapter 4, each of which works to replace some aspect of the firmwares and controllers that run underneath GCode (and some of the layers above it), was a critical prerequisite. With those contributions, I also showed that we can develop this new family of controllers with a reusable set of hardware and software modules rather than re-writing firmware or re-designing circuits for each new process.

9.2 Feedback Systems Assembly

I want to return to an observation that I made in the introduction, which is that GCode-based workflows are akin to compilers in computing; they rely on the regularization of the low levels of a system (and heuristics about those systems) to function, and they are fundamentally feed-forward. Figure 1.3 and Figure 1.4 are the best view of this comparison.

Along those lines, I want to try to articulate how the two halves of this document - one on systems integration tools (Chapter 2, Chapter 3, and Chapter 4) and one on workflows and controls (Chapter 5, Chapter 6, and Chapter 7) - are really sides of the same thesis on the use of feedback during systems assembly.

- This has to do with alignment, and whether or not it is automated, within the sub-components in our systems.

- When we build systems of any complexity, we need to use abstractions - interfaces - between components.

- Those abstractions also serve a primary role between the individuals who develop systems: machines, computers, etc. This is well articulated by (Baldwin and Clark 2000), who describe the miraculous development of the modern computer as a system of modules. See, e.g., a design structure matrix for a visual representation of this framing.

- This shows up in the assembly of computing systems that span multiple devices, where we must ensure that the two participating computers share a representation of what will happen, for example, when a message is transmitted from one to the other: what data will return, how will it be formatted, etc. This is a real problem for GCode interpreters, because GCode authors must understand exactly what subprogram will run when they send an obscure G28 (for example): they, and their controller, must have a shared understanding of this meaning. Not helping is the fact that the message itself is semantically meaningless. This setup also makes the modification of GCode interpreters difficult, as I explain in Appendix A.

- Compilers themselves, in the context of embedded devices, also lack information about e.g. how the peripherals in their target microcontrollers work: they simply write to memory. Nor do they “know” or have any heuristics for the hardware (circuit board) that the microcontroller is soldered into. We have to write software for those layers, and ideally that software articulates what the device can do to systems integrators.

- OSAP and PIPES are explicitly set up to automate parts of that process:

  - OSAP discovers network configurations, meaning that we don’t have to align our expectation of a network (which we might not easily know, or which might change) with the real system. This is as simple as not having to manually select a COM port when we talk to an embedded device.

  - PIPES discovers program configurations over those systems.

- This also shows up in the assembly of physical systems, as I have already discussed explicitly: models are the interfaces that let e.g. planners anticipate the material and machine physics that they are writing parameters for (Section 8.2).
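The shared-representation problem raised in the list above can be made concrete with a small sketch. It is not the OSAP or PIPES API: `FunctionDescriptor`, `Device`, `expose`, `describe`, and `home_axis` are hypothetical names invented for this illustration. The point is only that a device which publishes a description of what it can do gives the caller something to align against, whereas an opaque code such as G28 carries no meaning of its own.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Tuple


@dataclass(frozen=True)
class FunctionDescriptor:
    """Hypothetical self-description that a device publishes to its callers."""
    name: str
    arg_types: Tuple[type, ...]
    doc: str


class Device:
    """Hypothetical device whose functions can be discovered before use."""

    def __init__(self) -> None:
        self._functions: Dict[str, Tuple[FunctionDescriptor, Callable[..., Any]]] = {}

    def expose(self, descriptor: FunctionDescriptor, handler: Callable[..., Any]) -> None:
        self._functions[descriptor.name] = (descriptor, handler)

    def describe(self) -> List[FunctionDescriptor]:
        # Discovery step: the caller learns what exists instead of assuming it.
        return [descriptor for descriptor, _ in self._functions.values()]

    def call(self, name: str, *args: Any) -> Any:
        if name not in self._functions:
            raise KeyError(f"device exposes no function named {name!r}")
        descriptor, handler = self._functions[name]
        types_match = len(args) == len(descriptor.arg_types) and all(
            isinstance(arg, expected)
            for arg, expected in zip(args, descriptor.arg_types)
        )
        if not types_match:
            raise TypeError(f"{name} expects arguments of types {descriptor.arg_types}")
        return handler(*args)


motor = Device()
motor.expose(
    FunctionDescriptor(
        name="home_axis",
        arg_types=(str,),
        doc="Move the named axis to its limit switch and zero it.",
    ),
    lambda axis: f"homed {axis}",
)

for descriptor in motor.describe():
    print(descriptor.name, descriptor.arg_types, descriptor.doc)
print(motor.call("home_axis", "x"))
```

A mismatch between caller and device now surfaces as an explicit error at the interface, rather than as an unexpected machine motion.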

Which is all to say that the model which GCode was based on - the compiler - is ill-suited to the task that GCode is meant for. We need feedback, not just for the use of these systems, but for their assembly and integration. We also need it for the humans who participate in their collaborative development, as I discussed in Section 8.3.1.

9.3 Closing Note

Like many people who are exposed to it, I always found digital fabrication exciting for the possibilities it opened up as a craftsperson and engineer. I fell down the rabbit hole when I started trying to build my own machines, which I did mostly because my time was free, I didn’t have any money, and I wanted to have machines of my own.

I started the research arc that led to this thesis because every time I tried to modify these machines - to repurpose 3D printer firmware to make a five-axis milling machine, or to use a machine interactively - I was stymied by obscure systems with strange representations, running firmware whose core principles were not explained anywhere.

When I came to MIT I found that even here the nuts and bolts of what makes a machine tick are not very well understood by the people who use them. However, almost everyone who spends much time with them runs into the same frustrations. As Ilan Moyer would say, they do not feel like tools, but it seems like they ought to.

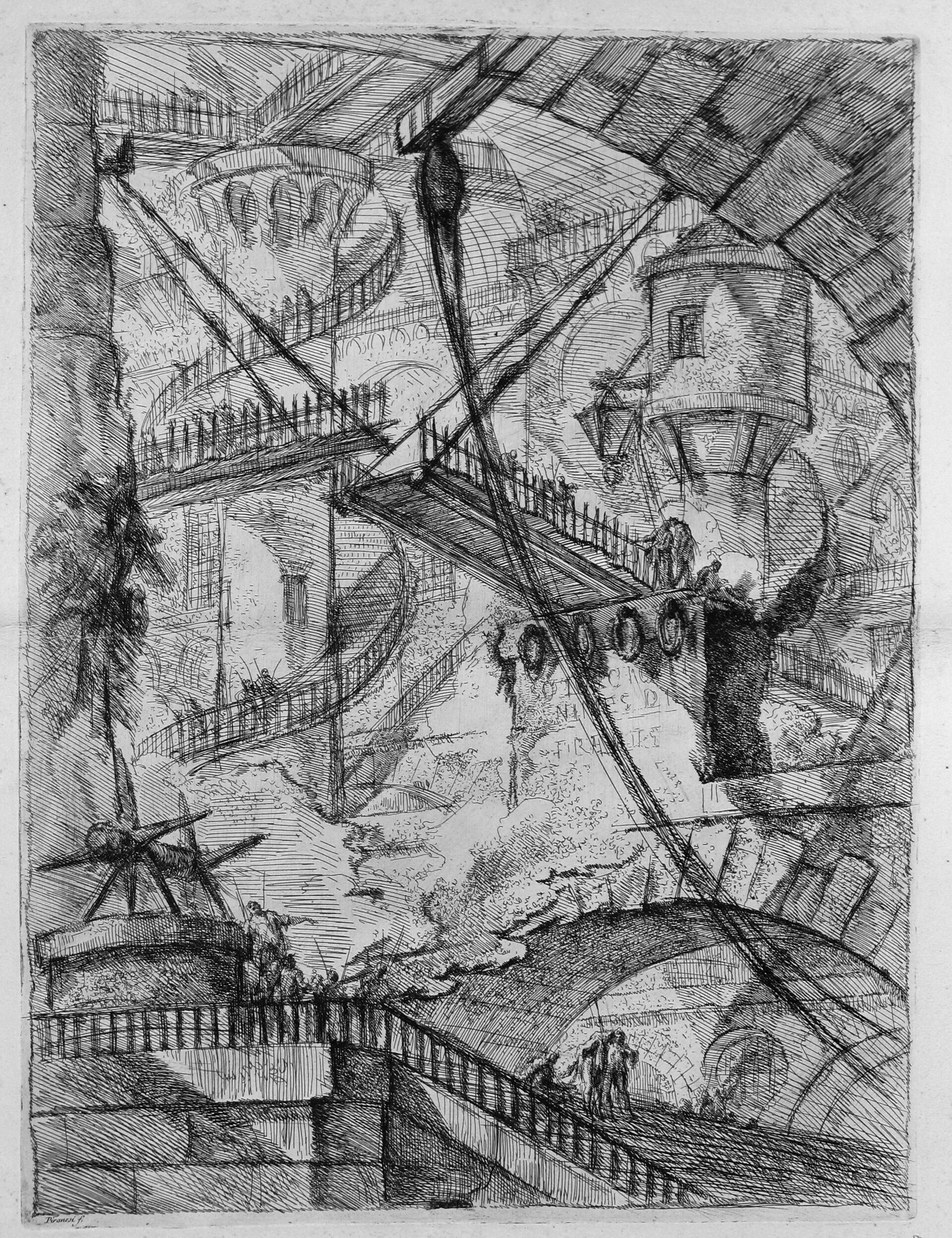

At the dawn of the first industrial revolution, in 1745, Piranesi etched his Imaginary Prisons, one of which is above (Piranesi 1745), depicting a dark future where humans are indentured to machines and systems of our own making. The future for digital fabrication is not so dire, but we seem to have failed in building machine systems that work for humans: they should be teaching us, and should enable us to willfully modify them. In their current configurations, machines for digital fabrication require serious engagement with occult knowledge. There has been excellent recent progress on this front in consumer-facing equipment (mostly 3D printers), but we have yet to make the development of new automation straightforward. This seems urgent at a time when our economic, demographic and environmental concerns will require us to build lots of tools very quickly.

In this thesis, I tried overall to contribute where I could to make machine operation and development make more sense, revealing that much of that occult knowledge is actually commonplace: everyday programming design patterns and physics that we each have some lived intuition for.

In the end, the thesis is named after GCode because that is the layer in the state of the art that separates real world physics “as it happens” from our intuitions of those physics “as they might happen.” That layer disconnects our actions from their consequences. Manual machinists often lament that they cannot feel what a CNC machine is doing. As it turns out, neither can the machines. In developing machine controllers that can feed back physical readings as they operate, and that use real physical models to do so, I showed that we can develop workflows that formalize our physical understandings, so that we can better make sense of the machinery that we use.