1 Introduction

1.1 Motivation

Machine building is a core competency for an industrial society, and is becoming increasingly democratized. For decades, manufacturers have used digital fabrication[1] to build products in large and small volumes. More recently, even small engineering firms are using machines to prototype designs in house, build automation equipment and tooling, and perform process-specific tasks and tests. Alongside these engineers we find scientists (2024) (2023), STEM educators (2012) (2013) (2020), craftspeople (2022), and autodidacts who all use (and develop) machines to learn - about our world, about maths and artistry, and about their ability to change the world while participating in our material economy.

It is not surprising that engineers might develop and deploy machinery, but our scientists, educators etc belong to groups whose main concern is not the machines themselves - these people’s heads are already filled with other (important!) knowledge. Understanding their tools should not require that they give up an exceptional amount of that valuable headroom.

Even large manufacturing firms need their machines to be easier to use: some half a million manufacturing jobs were unfilled in the USA in 2025 (NPR Planet Money 2025) (Bureau of Labor Statistics 2025) and thirty thousand in Canada (Statistics Canada 2025)[2], largely due to a gap in the availability of skilled workers. These valuable jobs (Labrunie, Lopez-Gomez, and Minshall 2025) often involve the operation and use of digital fabrication machines. Aspirationally, they involve machine development as well as use: MIT studies have found that factory workers were hopeful about automation that allows them to do more complex tasks (Armstrong et al. 2024), and that flexible manufacturing (which involves rapid re-tooling of equipment) is of key importance if we want to bring manufacturing back to the west, where automation has been poorly adopted (Armstrong and Shah 2023) (Berger and Armstrong 2022).

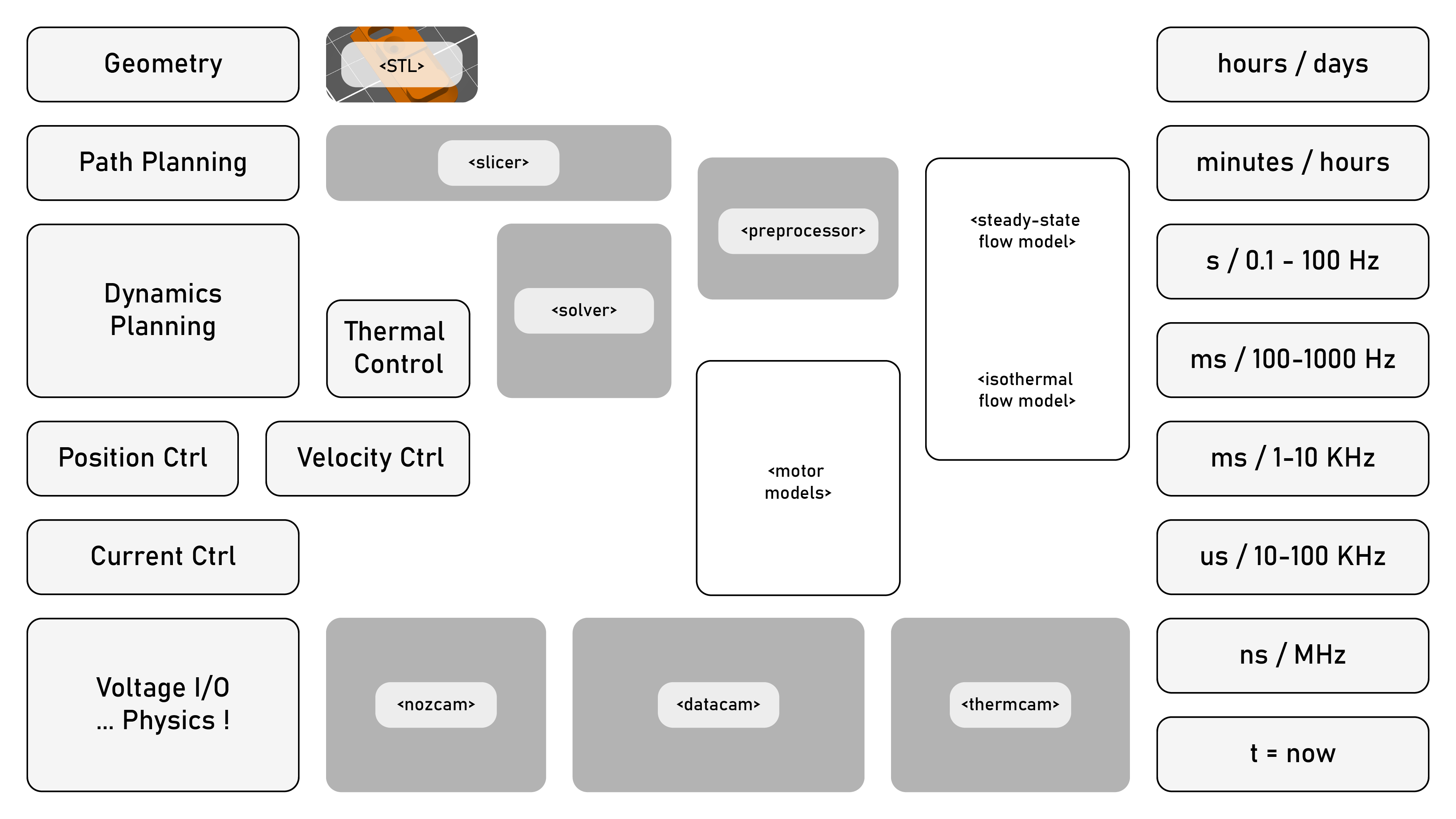

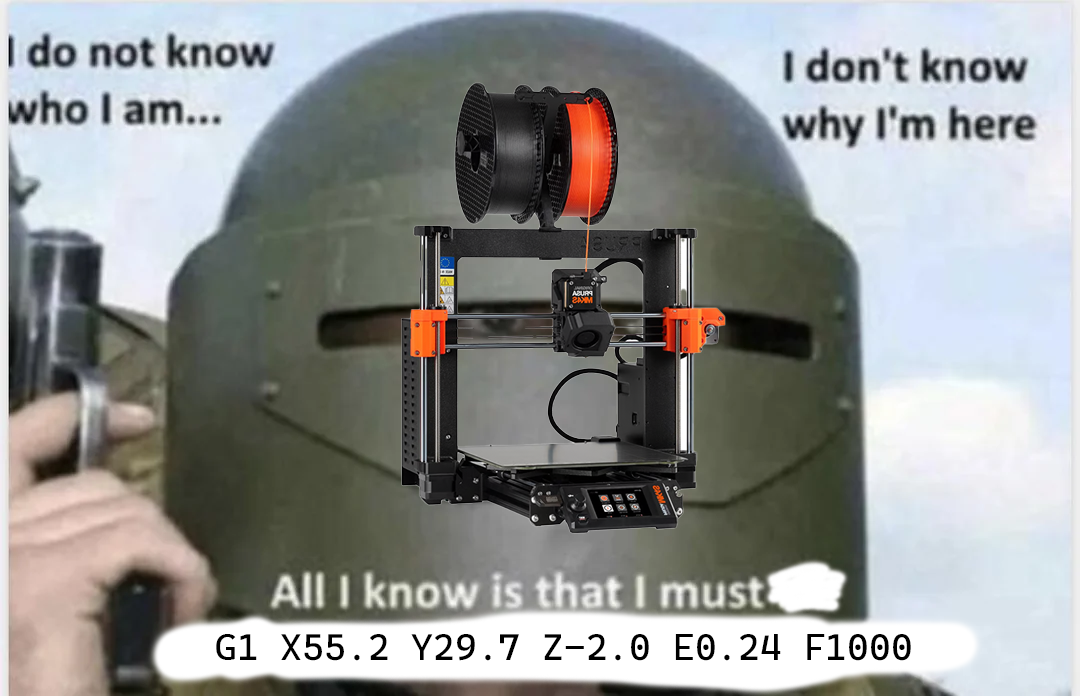

This motivates the central question in this thesis: how can we make the development and use of machines simpler? In particular, I am interested in replacing a pervasive and opaque representation of machine control (GCode) with a flexible and interpretable systems architecture that can be modified and operated using commonplace programming design patterns, and that enables the development of intelligent machine controllers and feedback-based workflows.

Those workflows and controllers revolve around the notion of constrained optimization. It is straightforward to see how this framing explains machine control: we have optimal outcomes (making our parts: precisely, quickly), and constraints (which emerge from machine and material physics: we cannot cut with infinite force or move at unlimited speeds). In the state of the art, many of these constraints are implicit and require that we carefully author our GCodes such that they are not violated, meaning that the use and design of machines requires a lot of tacit knowledge that can take years to develop. So, can we frame this constrained optimization problem more explicitly? Can we do so in our real-time controllers, by treating them as numerical solvers for optimization, and in our workflows, by reframing them so that parameter tuning is available within constraints while overall operation is automatically bounded by those constraints?
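As a minimal illustration of this framing (a sketch only, not the solver developed later in this thesis), consider a single straight move that starts and ends at rest. The objective is the shortest travel time, and the constraints are a speed limit and an acceleration limit. The function name and the numeric limits are hypothetical, chosen only for the example.

```python
import math


def min_move_time(distance, v_max, a_max):
    """Shortest rest-to-rest travel time subject to |v| <= v_max and |a| <= a_max.

    Returns (time, peak_velocity). Units are arbitrary but must be consistent,
    e.g. mm, mm/s and mm/s^2. This is the textbook trapezoidal profile.
    """
    if distance < 0 or v_max <= 0 or a_max <= 0:
        raise ValueError("distance must be >= 0 and both limits must be > 0")

    # Distance consumed by accelerating to v_max and braking back to zero.
    ramp_distance = v_max ** 2 / a_max

    if distance <= ramp_distance:
        # The move is too short to reach v_max: only the acceleration
        # constraint is active, and the velocity profile is a triangle.
        v_peak = math.sqrt(a_max * distance)
        return 2 * v_peak / a_max, v_peak

    # Both constraints are active: accelerate, cruise at v_max, brake.
    cruise_time = (distance - ramp_distance) / v_max
    return 2 * v_max / a_max + cruise_time, v_max


if __name__ == "__main__":
    # Hypothetical limits: 100 mm/s and 1000 mm/s^2.
    for d in (2.5, 110.0):
        t, v = min_move_time(d, v_max=100.0, a_max=1000.0)
        print(f"{d:6.1f} mm -> {t:.3f} s, peak {v:.1f} mm/s")
```

Here the constraints are stated once, explicitly, and the fastest feasible motion follows from them, rather than being encoded by hand as a feedrate in each line of GCode.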

Besides machine users, this thesis also aims to provide value for folks for whom machine design and control is the main activity. Tooling for machine builders is notoriously proprietary - even large firms like Fanuc, Haas or Robodrill contract the controls for their machines to other firms like Siemens and Heidenhain, whose algorithms are kept close to the chest (2020). This is despite the fact that almost every machine, before it can become excellent, must first master the simple task of moving around well - quickly, precisely, efficiently, and smoothly. If state-of-the-art design patterns for these core competencies were more broadly understood and easier to implement and extend, we may be able to help advance innovation worldwide.

Machine control is a task that I and many of my peers have found to be surprisingly nuanced; it runs us through a gauntlet of topics from power electronics and current control through time, space and frequency domains and into model predictive control, dynamic programming and nonlinear optimization. Mostly, people wade into these problems after having designed some hardware that they want to control - only to find the task riddled with “gotchas” and “traps for young players.”[3]

Besides my own colleagues and friends with whom I have worked on machine building, I have been able to meet many small (and large) companies who are developing new machines, e.g. for composite fiber layup, novel additive manufacturing processes, hydraulic valve tuning, medical device manufacturing, etc. Each of them has had to rebuild a controls stack internally, especially in cases where motion controllers must be tightly coupled to process controllers. If nothing else, I hope that this thesis can serve as a guide through these tasks, towards a world where building machines is more like building software - i.e. where we can find very good solutions to common problems available in the commons, and easily yoke them to our particular applications.

1.2 Background

1.2.1 A Small GCode Primer

Understanding how GCode works, what niche it fills, why we might want to replace it (and why it is so pervasive) is important background for this thesis. To that end, let’s look at a basic GCode “program”[4] that, for example, cuts a square out of a piece of stock using a router (a subtractive tool).

L01 G21 ; use millimeters

L02 G28 ; run the homing routine

L03 G92 X110 Y120 Z30 ; set current position to (110, 120, 30)

L04

L05 G0 X10 Y10 Z10 F6000 ; "rapid" in *units per minute*

L06

L07 M3 S5000 ; turn the spindle on, at 5000 RPM

L08

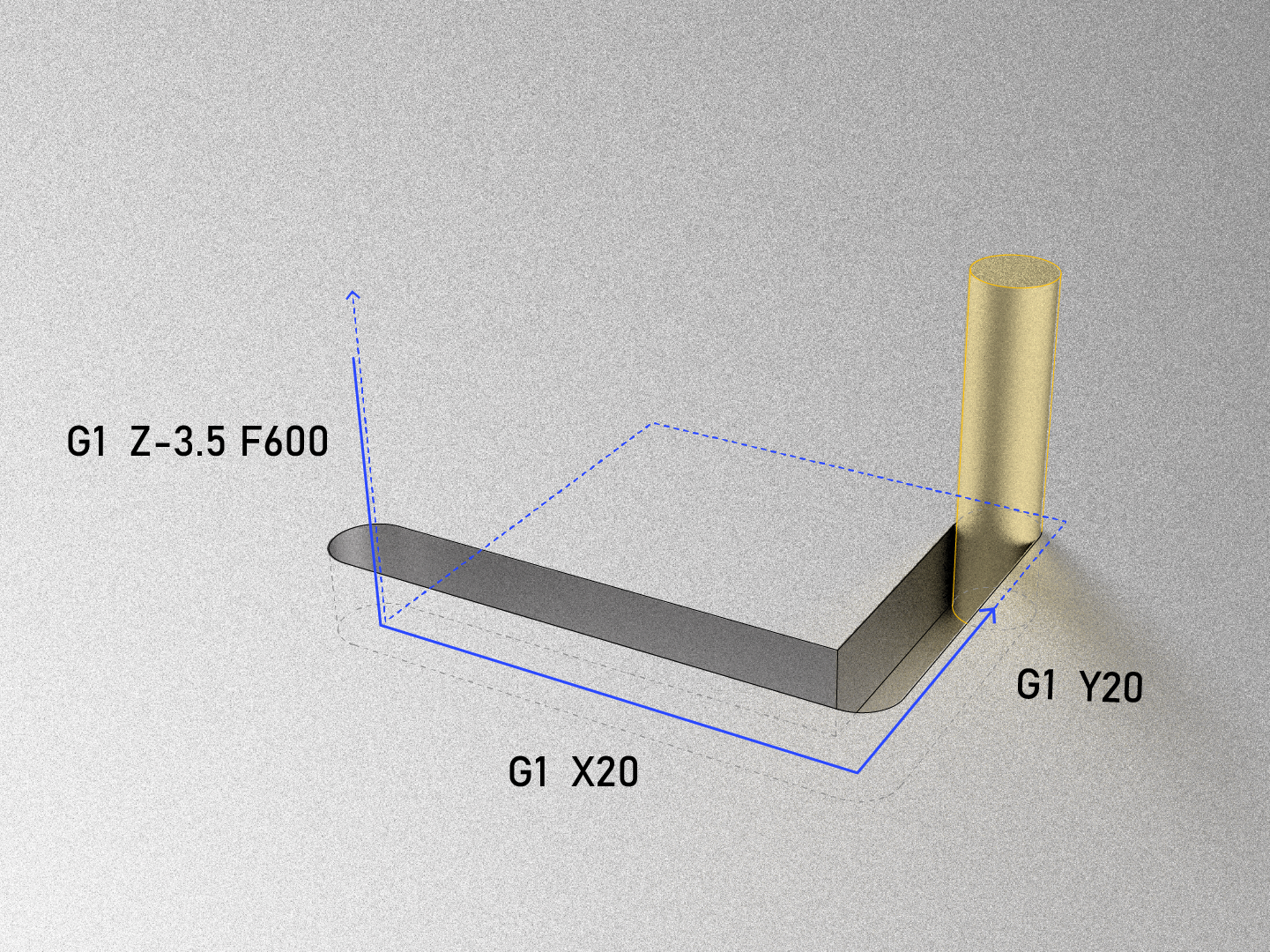

L09 G1 Z-3.5 F600 ; plunge from (10, 10, 10) to (10, 10, -3.5)

L10 G1 X20 ; draw a square, go to the right,

L11 G1 Y20 ; go backwards 10mm

L12 G1 X10 ; go to the left 10mm

L13 G1 Y10 ; go forwards 10mm

L14 G1 Z10 ; go up to Z10, exiting the material

L15

L16 M5 ; stop the spindle

L17 G0 X110 Y120 Z30 ; return to the position after homing (at 6000)

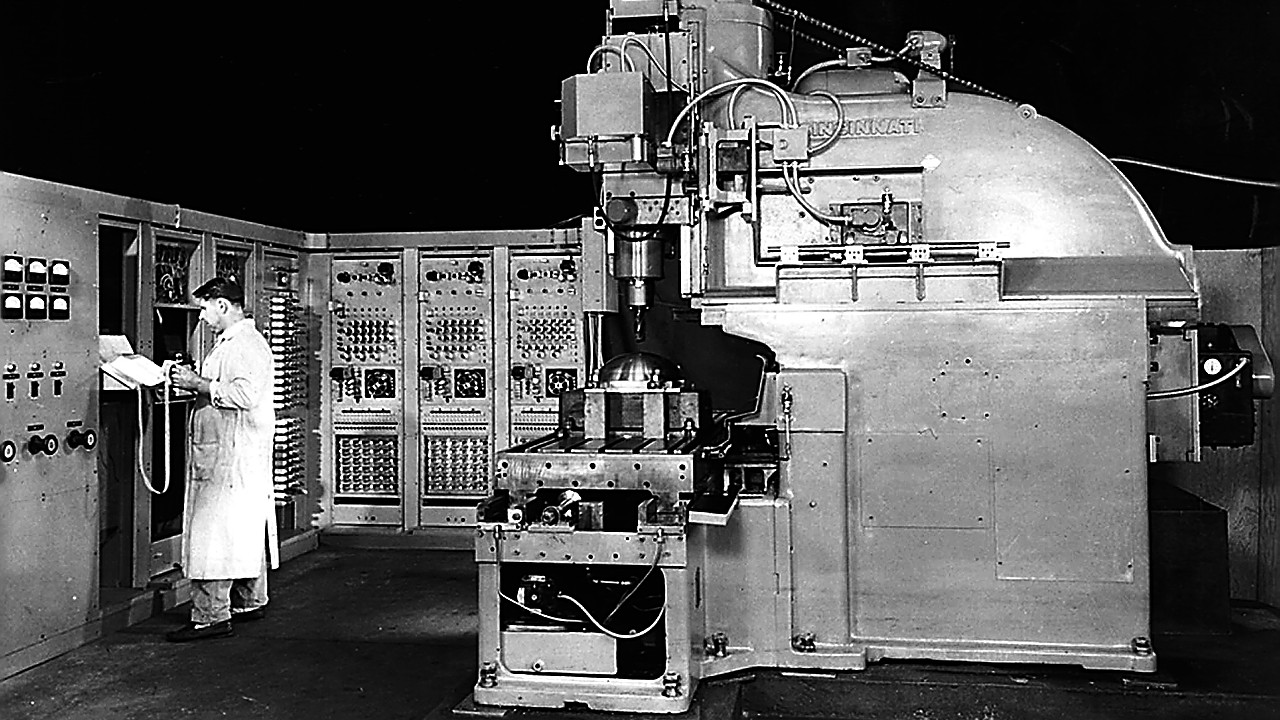

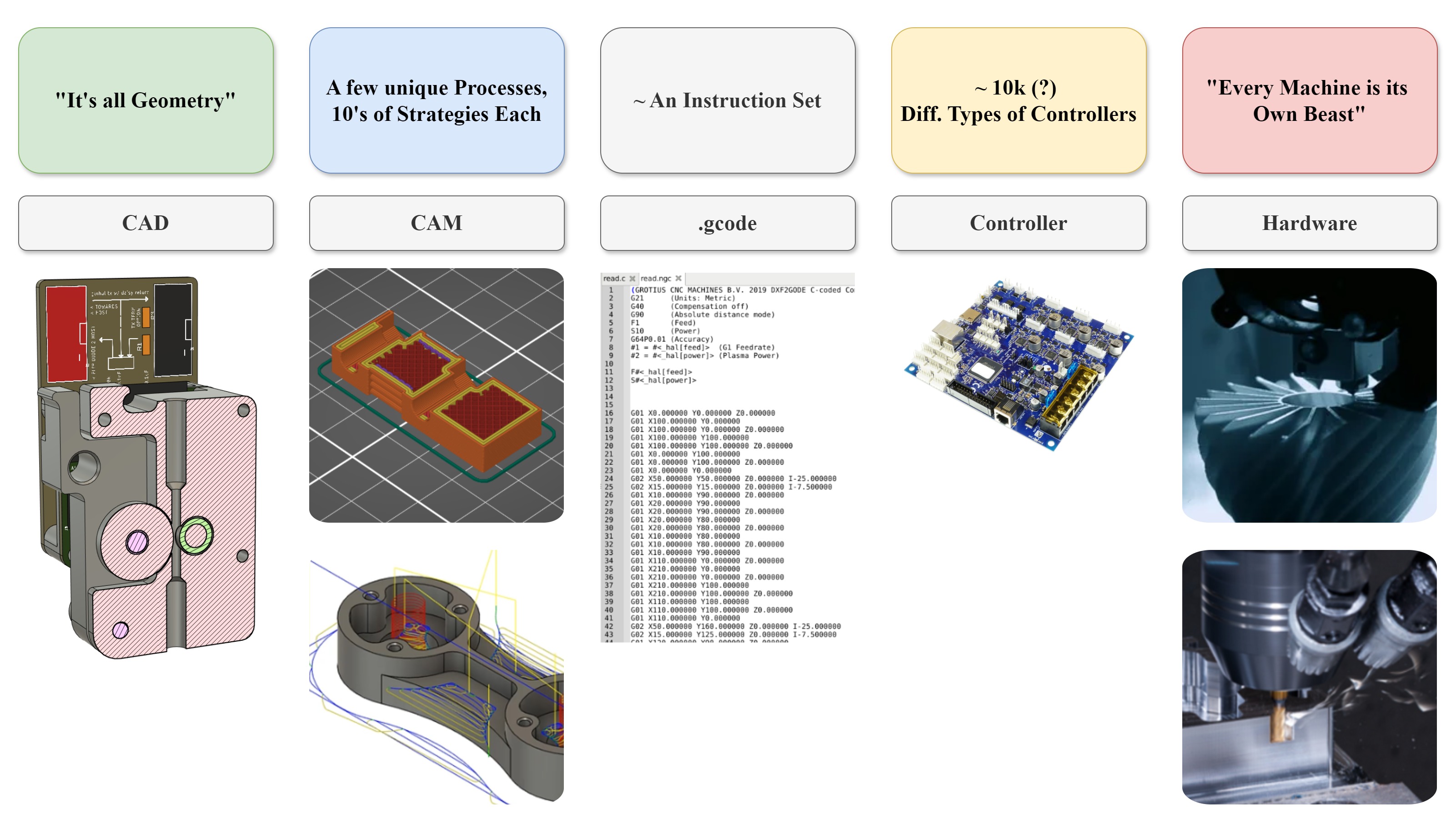

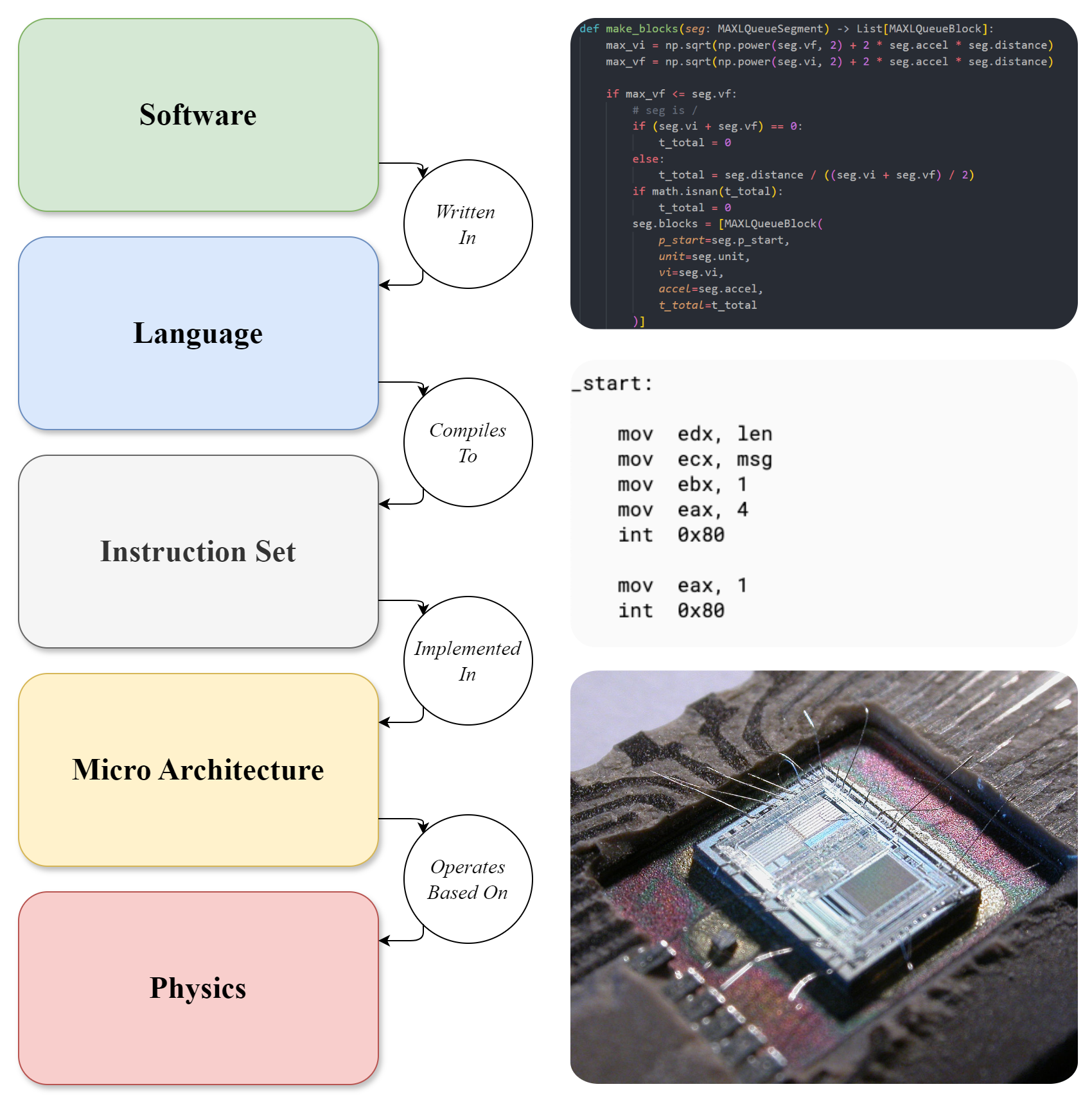
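To make the modal nature of this program concrete, the following sketch traces the tool position that each motion command produces. It is a toy reader written for this example only, not any real controller's interpreter: it understands just G92, G0 and G1, treats coordinates as absolute, assumes the machine is already homed, and ignores feedrates and spindle commands (whose handling differs between GCode flavors). The names `PROGRAM`, `WORD` and `trace` are hypothetical.

```python
import re

# The example program from above, without the L01..L17 labels.
PROGRAM = """
G21                   ; use millimeters
G28                   ; run the homing routine
G92 X110 Y120 Z30     ; set current position to (110, 120, 30)
G0 X10 Y10 Z10 F6000  ; rapid
M3 S5000              ; spindle on
G1 Z-3.5 F600         ; plunge
G1 X20
G1 Y20
G1 X10
G1 Y10
G1 Z10                ; exit the material
M5                    ; spindle off
G0 X110 Y120 Z30      ; return to the position after homing
"""

# One GCode "word": a letter followed by a (possibly negative, decimal) number.
WORD = re.compile(r"([A-Z])(-?\d+(?:\.\d+)?)")


def trace(program):
    """Return a list of (command, x, y, z) tuples, one per G0/G1 move."""
    position = {"X": 0.0, "Y": 0.0, "Z": 0.0}
    moves = []
    for line in program.splitlines():
        code = line.split(";", 1)[0].strip()  # drop the comment
        words = WORD.findall(code)
        if not words:
            continue
        command = words[0][0] + words[0][1]
        # Keep only axis words; F and S are ignored in this sketch.
        targets = {axis: float(value) for axis, value in words[1:] if axis in position}
        if command == "G92":
            position.update(targets)  # redefine where we are, no motion
        elif command in ("G0", "G1"):
            position.update(targets)  # axes not mentioned keep their old value
            moves.append((command, position["X"], position["Y"], position["Z"]))
    return moves


if __name__ == "__main__":
    for move in trace(PROGRAM):
        print(move)
```

Note that a line such as `G1 X20` carries no Y or Z value: its meaning depends entirely on state accumulated from earlier lines.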

GCode was developed around the same time as programming languages, compilers, and instruction sets: in computer science we call these “layers of abstraction” and they help us to build complex things without worrying about the particulars. Programming languages allow software engineers to write algorithms without manually writing out machine instructions (Parnas 1972), and compilers rely on static instruction set architectures (ISAs) to generate low-level codes without concern for how they are actually implemented in hardware (see Figure 1.4). GCode was meant to take the same place in machines, allowing machine programmers (formerly machinists) to develop manufacturing routines that could be deployed on variable hardware (Noble 1984).

This probably seemed like a good idea at the time, but it is stymied by two important facts. Firstly, while there are only a few ISAs for compilers to contend with (and even then, a real homogeneity around x86, ARM and now RISC-V[5] in practice), there are perhaps tens of thousands of unique GCode interpreters, each with a unique “flavor” of GCode. I diagram this relationship in Figure 1.4. For example, in line 10 above, we tell the machine G1 X20 (move the X axis to the 20mm position, i.e. 10mm to the right of the position established in L05), but it is impossible to know from the code alone which motor (or combination of motors) is actually going to execute this move; there is a system configuration that is hidden from us and unique to the individual machine. This seems like a simple problem (simply write down the configurations, right?) but it is a major hurdle for companies like Autodesk, whose CAM software (Computer Aided Manufacturing, see Section 1.2.2) must interface to many machines and needs to know exactly how (for example) the X axis moves relative to the rest of the machine in order to simulate collisions and show the user what their program will do. This is even more difficult with advanced machines like mill/turn machines[6], where most users resort to programming jobs manually (i.e. bypassing CAM software) - a clear failure of what is meant to be a low-level layer.
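A small sketch shows why the motors behind `G1 X20` cannot be inferred from the code alone. It compares two common planar arrangements: a plain Cartesian gantry (one motor per axis) and a CoreXY gantry (two motors that jointly drive both axes). The CoreXY sign convention used here is one common choice and varies between machines; the function names are hypothetical and the units are millimeters of belt travel.

```python
def cartesian(x, y):
    """One motor per axis: motor A drives X, motor B drives Y."""
    return (x, y)


def corexy(x, y):
    """CoreXY: both motors contribute to both axes (one common sign convention)."""
    return (x + y, x - y)


def motor_deltas(kinematics, start, end):
    """Motor travel needed to go from `start` to `end`, both given as (x, y)."""
    a0, b0 = kinematics(*start)
    a1, b1 = kinematics(*end)
    return (a1 - a0, b1 - b0)


if __name__ == "__main__":
    # Line 10 of the example program: from (10, 10) to (20, 10).
    start, end = (10.0, 10.0), (20.0, 10.0)
    print("cartesian:", motor_deltas(cartesian, start, end))
    print("corexy:   ", motor_deltas(corexy, start, end))
```

The same instruction moves one motor on the first machine and two on the second; which of these applies is recorded in the controller's configuration, not in the GCode.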

Secondly, physical processes themselves are also heterogeneous: even if we run the same job on a machine thousands of times, each run is bound to be different: cutting tools wear out, incoming stocks are of slightly different sizes and compositions, and external factors like heat soak and ambient air temperature all change. Whereas computer science goes to great lengths to ensure the homogeneity of the lowest layers of its stack (the implementation of ISAs), it is simply not possible in manufacturing to do the same. All the while, GCode provides no recourse to use real-time information in the execution of manufacturing plans, i.e. it is entirely feed-forward: if we want to describe an algorithm that controls a machine intelligently based on physical measurements, GCodes are simply not an option.

GCode also contains a mixture of simple instructions like G1 (goto position) alongside larger subprograms like G28, a homing routine that may involve coordination of many of the machine’s components. If we want to interrogate what G28 is actually going to do (i.e. to see which axis is going to move first), there is no way to inspect “one layer down” from the G28 that is blankly staring at us. Later on in Section 3.2, I articulate how the contributions in this thesis transform the opaque GCode above into an inspectable set of programming tools.

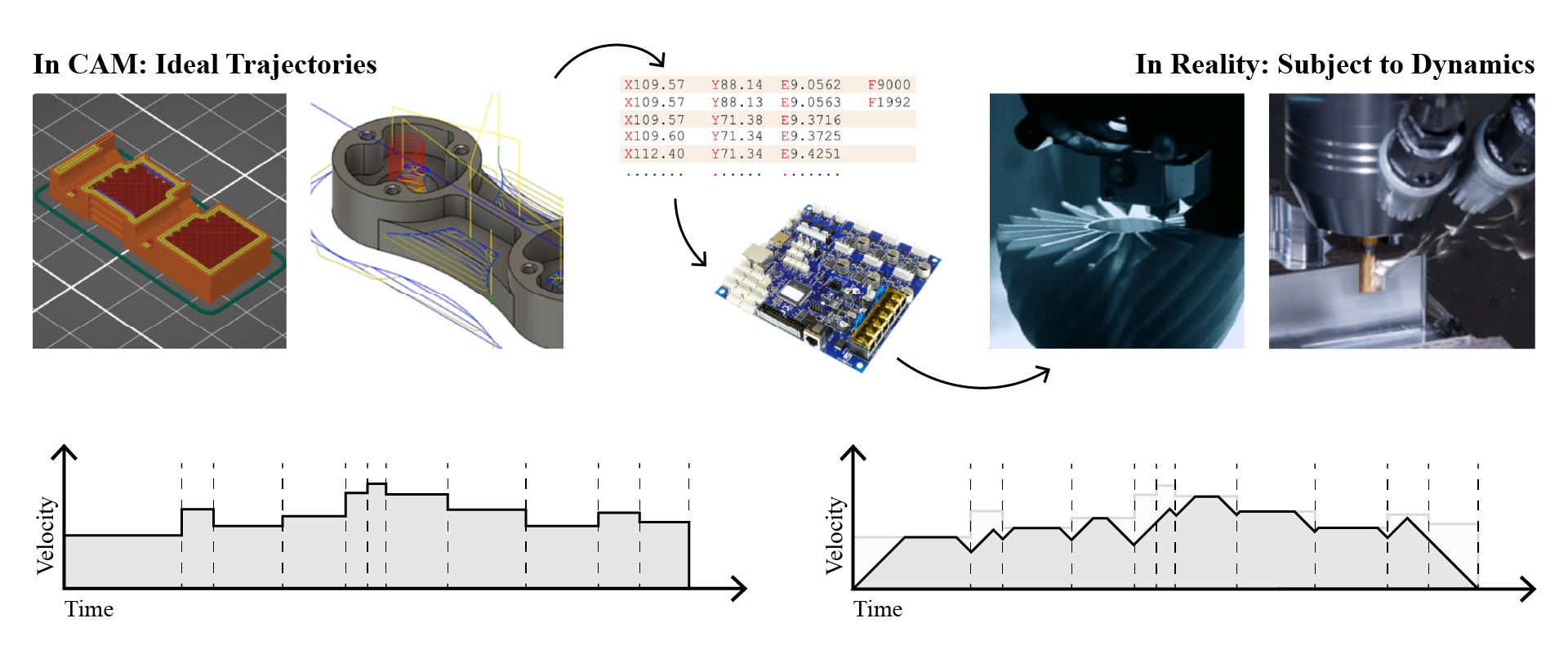

1.2.2 A Small CAM Primer

While GCodes can be written by hand, they are most often generated using CAM tools (for Computer Aided Manufacturing). When someone learns how to make things with digital fabrication, much of their effort is devoted to learning how to use CAM software. CNC Machining CAM is notoriously difficult, whereas 3D Printing CAM tools (known as slicers) are much easier (this is one of the main reasons that 3D Printing has become so ubiquitous). Each has its own quirks, but all follow a similar pattern: a 3D file is imported and positioned in a virtual work volume, and then various parameters are configured such that the software can generate a path plan (which will become GCode) for the selected machine. Figure 6.1 and Figure 7.4 give examples of printing and milling parameter sets respectively. For a longer discussion of each, see Section 6.2 and Section 7.2.2.

For now the main issue to point out is that CAM tools are disconnected from the machines that they write instructions for. Again, see Figure 1.4. GCode does not afford a pipe that can send data from the machine into CAM. Neither are the parameters that users set in CAM related to physical models of the process: success in printing is related to filament flow and cooling, but in a slicer we tune layer height and print speed (which are only indirectly related). Machining tools are limited by stiffnesses, cutting forces and vibrations: in CAM tools we set feedrates and spindle speeds - again, indirectly related.
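A short worked example makes the indirection concrete. Under the common approximation that an extruded line has a rectangular cross-section, the volumetric flow a printer must deliver is the product of layer height, line width and print speed. Line width and the filament diameter are assumptions added for this sketch, and the function names are hypothetical.

```python
import math


def volumetric_flow(layer_height, line_width, speed):
    """Approximate flow in mm^3/s for a rectangular bead (mm, mm, mm/s)."""
    return layer_height * line_width * speed


def filament_feed_rate(flow, filament_diameter):
    """Filament speed in mm/s needed to supply `flow` mm^3/s."""
    area = math.pi * (filament_diameter / 2) ** 2
    return flow / area


if __name__ == "__main__":
    # Hypothetical slicer settings: 0.2 mm layers, 0.4 mm lines, 60 mm/s.
    q = volumetric_flow(0.2, 0.4, 60.0)
    print(f"flow: {q:.2f} mm^3/s")
    print(f"filament feed: {filament_feed_rate(q, 1.75):.2f} mm/s")
```

The user tunes the three factors separately, while the physical limit (how quickly the hot end can melt plastic) applies to their product, which the slicer's parameter set does not represent directly.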

1.2.3 Machines are Thoughtless

So we come to the core of the problem in the state of the art, which is that digital fabrication equipment does not think about what it is doing, nor can it tell a user what it is capable of, anticipate errors that may arise from a particular program, or be easily re-configured to do a task that its original programmers did not imagine. This is essentially due to the disconnect between CAM tools, where users program their machines, and machine controllers, where instructions are carried out. When mismatches arise between CAM outputs and real conditions, machines simply fail. For all of its advances, most digital fabrication seems about as sophisticated as the inkjet printing we are all familiar with: sometimes straightforward and unsurprising, or else error prone and frustrating, but never enlightening.

For example, a CNC Milling Machine itself has no ‘knowledge’ of the physics of metal cutting, chip formation (see Figure 7.1) or structural resonances even though these physics govern how we might whittle a block of aluminum down into the desired shape. In the same way, a 3D printer knows nothing of the rheology involved in heating, squeezing and carefully depositing layers of plastics in order to incrementally build 3D parts (see Figure 6.2). It is difficult to say whether any computer system has ‘knowledge’ of anything; in this case I mean that our machines do not use any information about the material physics (and very little about their own physics) they are working with while they operate[7].

In order to operate successfully, machines need to have some understanding of these physics embedded into the low-level instructions they do receive, but those are developed in CAM tools that only encode them as heuristics and as abstract parameters that don’t have direct correlations to process physics. This poses a problem for machine builders (who need to carefully tune their motion systems) and machine users (who need to intuitively tune a slew of CAM parameters). It also means that many machines do not operate near their real world optimal limits (since hand-tuned parameters tend to leave considerable safety margins), leaving some performance on the table. Finally, it prevents us from learning from our machines: where the limits are, where we are operating with respect to those limits, and how we might best change our machines or our approaches in order to do better.

1.2.4 Machines are Poorly Represented

The technical root of this non-thinking issue is perhaps best articulated like this: machine controllers and users don’t have good representations of the machines that they are meant to operate. They don’t know what they are, let alone what they are doing. A slightly more precise way to say this is that machine representations are lossy - they are misaligned with reality, and difficult to correct.

I discussed one aspect of this problem already: when we issue a code like G1 X100 (move the X axis to position 100), we need our mental model of the machine (which axis is “X”) to align with the controller’s model (and wiring diagram). This level of the problem is just configuration. In the state of the art, this configuration is set up in the machine’s firmware[8], which is difficult to inspect and edit. These “hidden states” cause all manner of headaches for educators, hobbyists, and scientists who want to re-purpose off-the-shelf controllers for their own inventions, especially if their machines deploy nonlinear or novel kinematics. One common result of this problem is to find machines in the wild whose controllers are convinced that they are a 3D Printer when in reality they are, e.g., a gel extruder (Dávila et al. 2022) or a liquid handling robot (Mendez and Corthey 2022), or cases where authors have had to develop their own ad hoc control systems (rather than re-using available designs) (Dettinger et al. 2022) (Florian 2020).

What a machine builder normally wants is a computational representation of their machine that is aligned with reality - this is half semantic alignment (any axis can be named “X” if we declare it to be, but our CAM and Controller should agree on the declaration) and half tuning and model fitting (how are the kinematics arranged, how much torque is available, and what happens if we apply some amount of it over the course of one second?). With a complete computational representation of our machines, we would be able to simulate and visualize them in software to learn what they might do given certain instructions. The danger in this approach is that the simulation is misaligned with reality - remember how no two machines are truly the same - and this is why this thesis focuses on using in situ instrumentation to build the models used to represent machines: i.e. we use the machine to build models of itself.
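As a toy illustration of the "tuning and model fitting" half (a sketch under strong simplifying assumptions, not the modelling method developed later in this thesis), suppose a rotary axis is a pure inertia with no friction, so that torque equals inertia times angular acceleration. Given torque and acceleration pairs measured on the machine itself, a least-squares fit recovers the inertia, and the fitted model then answers the "what happens after one second" question. The sample values below are synthetic and the function names are hypothetical.

```python
def fit_inertia(samples):
    """Least-squares fit of J in torque = J * accel.

    `samples` is a list of (torque_Nm, accel_rad_per_s2) pairs.
    Minimizing sum((torque - J * accel)^2) gives the closed form below.
    """
    numerator = sum(torque * accel for torque, accel in samples)
    denominator = sum(accel * accel for _, accel in samples)
    if denominator == 0:
        raise ValueError("need at least one sample with nonzero acceleration")
    return numerator / denominator


def velocity_after(torque, inertia, duration):
    """Angular velocity (rad/s) after a constant torque is applied from rest."""
    return torque / inertia * duration


if __name__ == "__main__":
    # Synthetic measurements, for illustration only.
    samples = [(0.1, 50.0), (0.2, 100.0), (0.3, 150.0)]
    inertia = fit_inertia(samples)
    print(f"fitted inertia: {inertia:.4f} kg m^2")
    print(f"velocity after 1 s at 0.2 Nm: {velocity_after(0.2, inertia, 1.0):.1f} rad/s")
```

A real axis would also need terms for friction, damping and torque limits; the point here is only that the parameters come from measurements on the machine, not from a datasheet or a guess.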

1.3 Key Questions and Contributions

By now we can tell that getting a machine to operate successfully requires machine users to implicitly understand process physics and machine physics, but also to understand how parameters will translate into real-world operation through a set of hidden control and configuration steps.

The broad goal of this thesis is to take these scattered, misaligned machine representations and collect them together into comprehensive and comprehensible computational representations (models and programs). Doing so will let us operate our machines more effectively, reduce the parameter space that requires trial-and-error tuning, help us to rapidly build new machines and teach us about those that already exist, and enable us to connect them to smarter control algorithms.

I have a set of guiding questions:

- What kind of systems architecture would enable us to develop feedback-based machine control workflows?

- What programming representation lets us easily use the same hardware both for model building, and for model based control?

- How can machine workflows and algorithms be described (without moving between formats or programming models) when they consist of both high- and low-level components?

- Rather than re-building controllers for each new machine and process, can we do this in a way that is modular and re-useable across the heterogeneity of machine processes?

- What might a “complete” feedback-based digital fabrication workflow look like, and how does it perform against the state-of-the-art?

- What types of models do we need in order to capture and represent machine physics?

  - For their motors?

  - For their kinematics?

  - For their processes?

  - Can we build those models using the machines themselves?

- If machine control is fundamentally a constrained optimization task, and that optimization is performed intuitively in the state of the art by machine operators, can we instead re-frame it as an explicit, numerically solved constrained optimization problem?

  - Are these faster or otherwise better than state-of-the-art solutions, or are the gains only marginal?

- Models are sure to miss some aspects of machine control that remain easily understood intuitively. Model-based control risks turning well understood and productive processes into black-boxes, which would prohibit machine operators who already have a strong understanding of their hardware and processes from applying that knowledge and make it more difficult for newcomers to learn.

  - How do we combine models with heuristics where they are still valuable?

  - Can we present model and optimizer outputs in a manner that could be informative for machine users and designers?

- Finally, I have a similar question to the one posed above, but framed around machine design as a practice:

  - How can models and optimizers help designers to evaluate their machines?

  - How can models be used as tools in the machine design process?

Model-based control risks becoming a black box

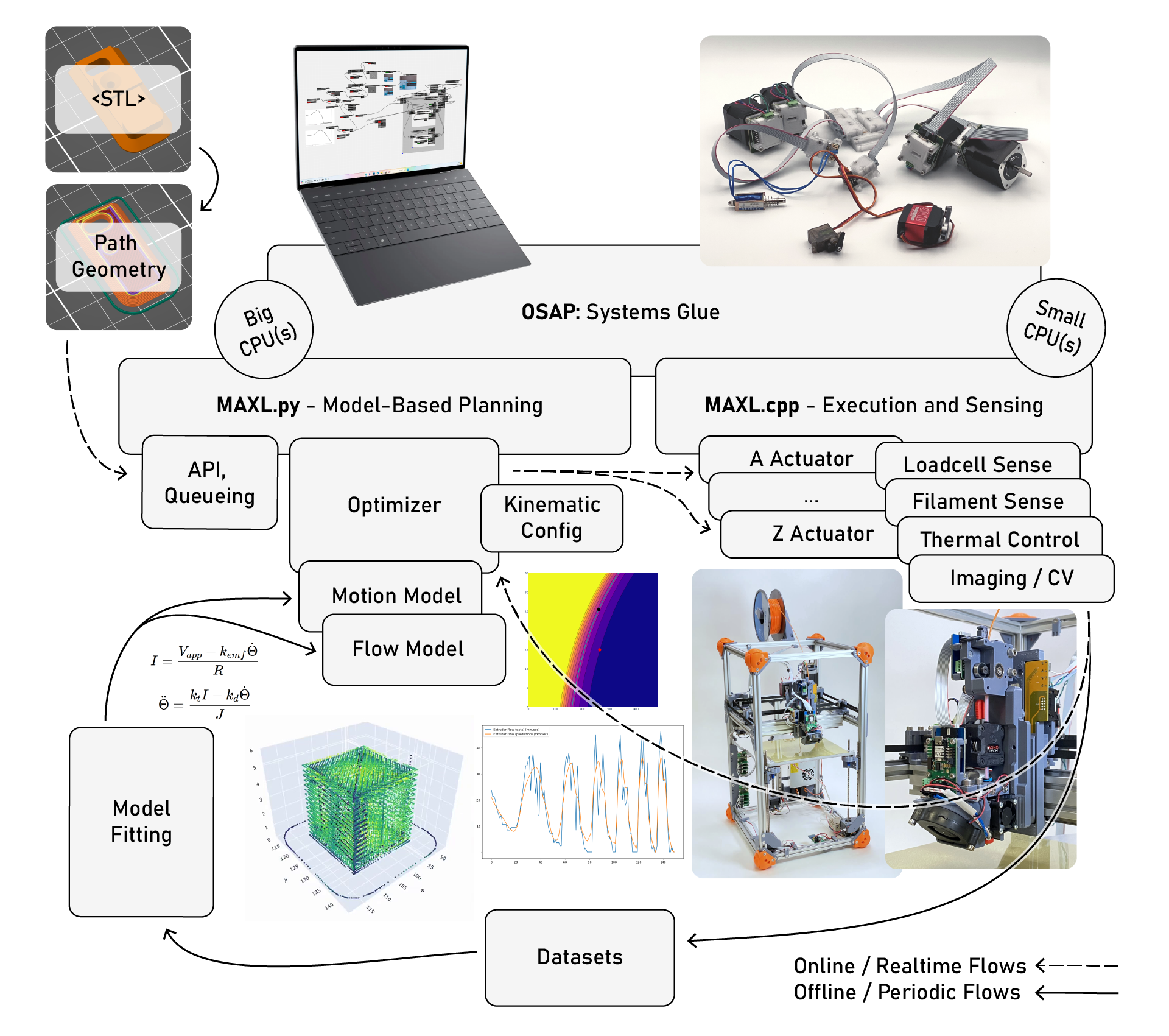

To answer the first question above, I develop a few core methods for the thesis:

- In Chapter 2, I build a networking architecture that connects high and low level software environments across heterogeneous links, allowing us to connect machine hardware to software. This has a few important properties:

  - Connections are relatively low latency.

  - Network configurations can be ascertained automatically, reducing setup burden on machine builders.

  - Clocks in the network are automatically synchronized, providing a basis for co-ordinated control and sensing.

- Chapter 3 covers my progress on programming models (and libraries) for modular machine control.

  - In Section 3.2, I develop PIPES, a programming interface that represents distributed systems using dataflow and remote procedure call semantics. This allows us to develop machine algorithms whose components are made up of firmware and software modules together.

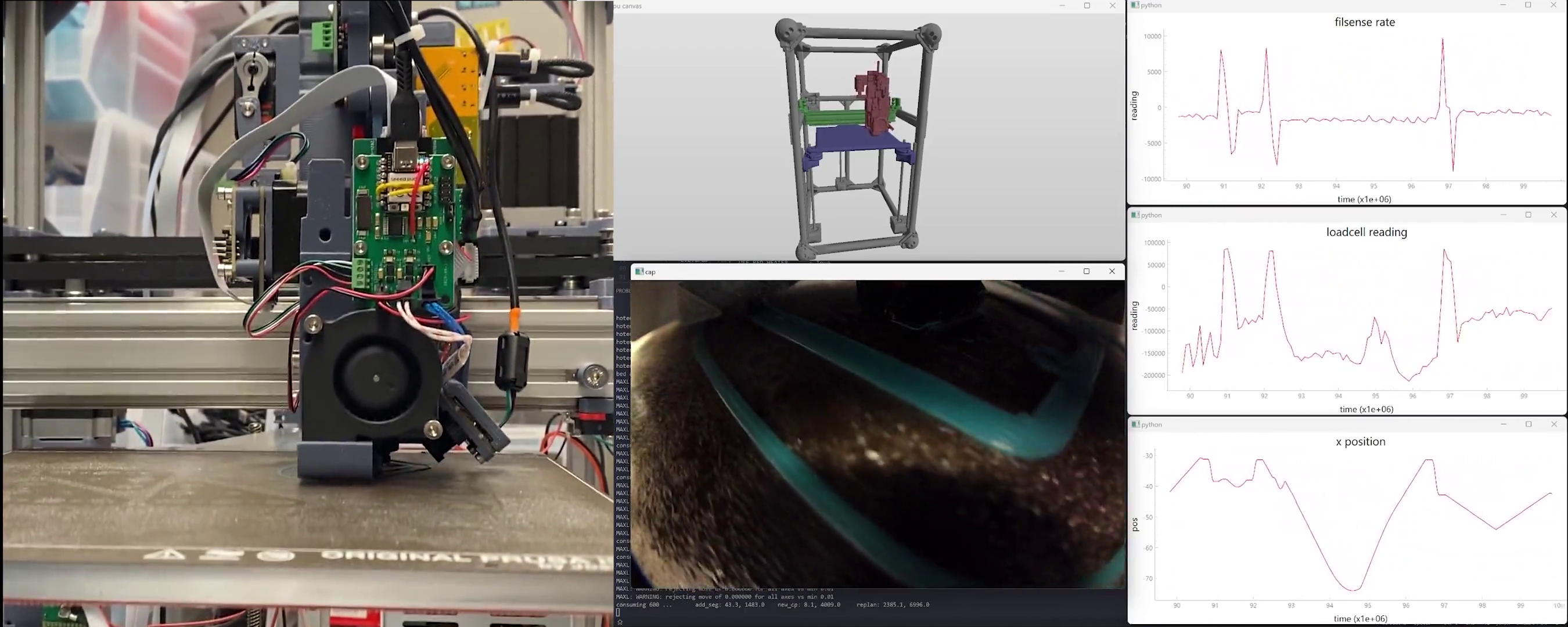

- Within the PIPES framework I develop MAXL (Section 3.3), a motion control framework for distributed but co-ordinated execution of machine controls.

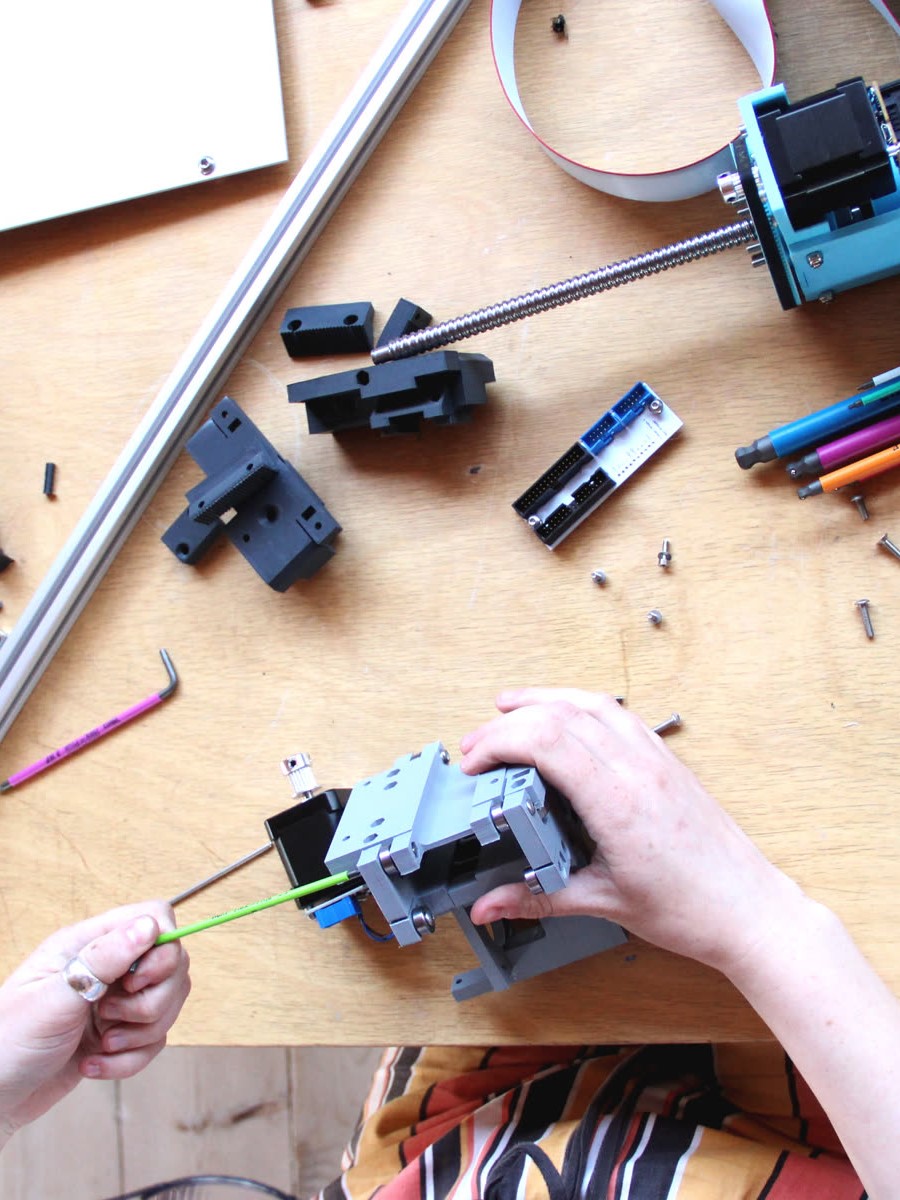

- In Chapter 4, I develop a kit of modular control boards for actuation and sensing of machines.

Using these tools, I can begin to answer the remaining questions:

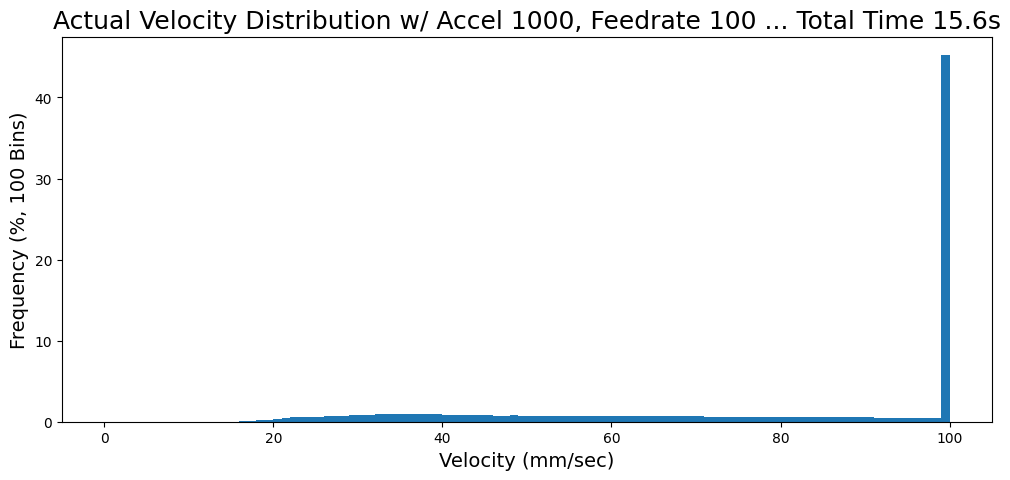

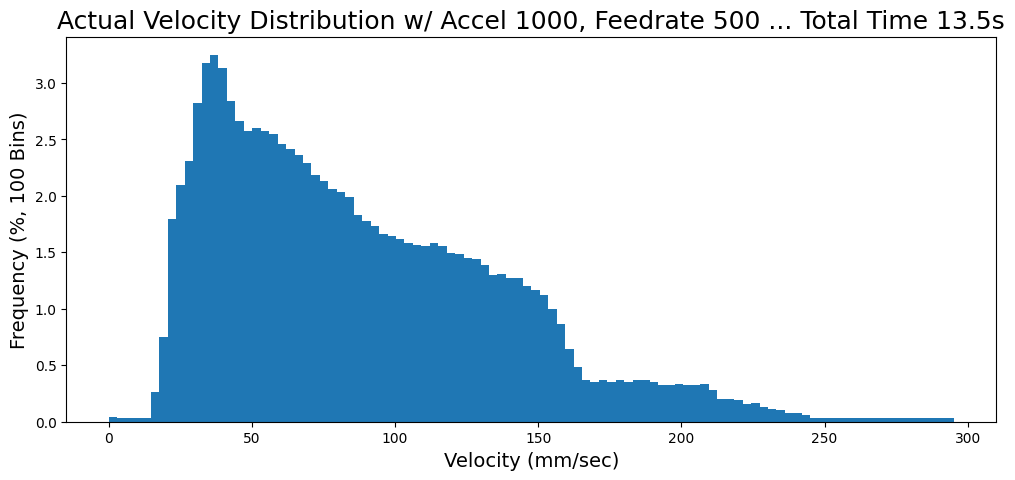

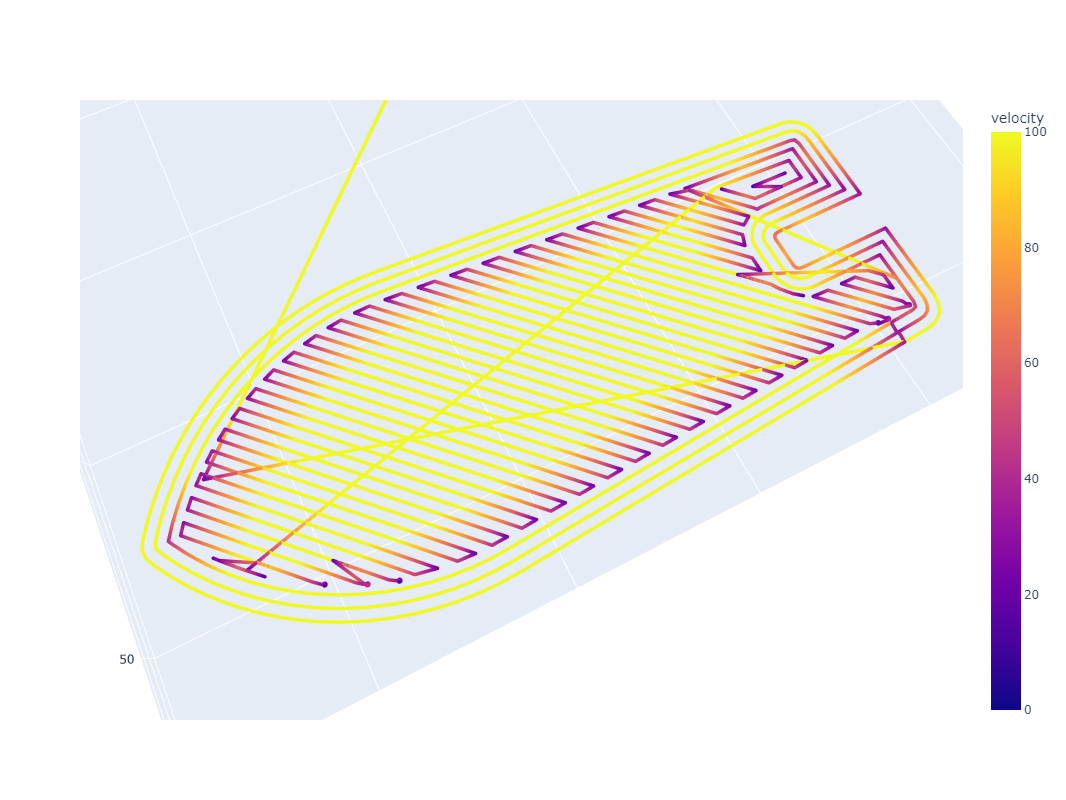

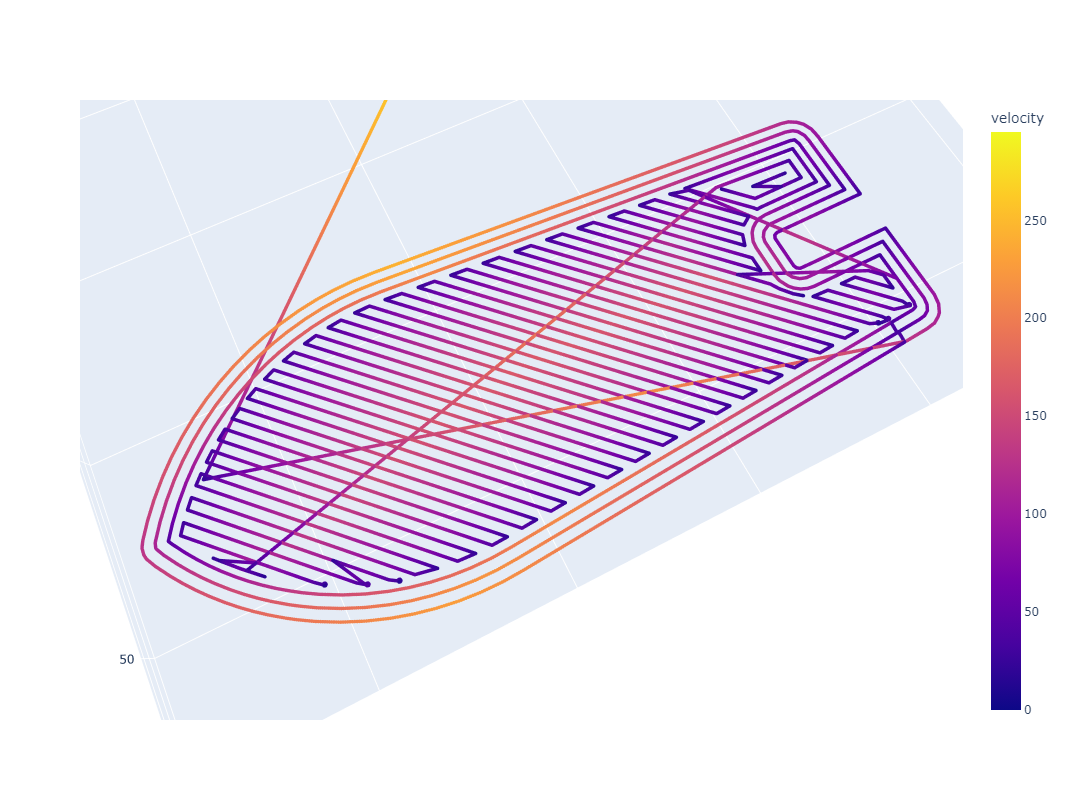

- In Chapter 5 I develop a velocity planner that expresses machine control as an explicit constrained optimization problem. Here, I show that model-based control can exceed the performance of heuristics-based solvers that are common in the state of the art for the same task. This requires that I develop a few additional methods:

  - A closed-loop stepper motor controller that can learn some of its own model parameters (Section 5.3.1).

  - Models of machine mass, damping, and kinematics (Section 5.3.2).

  - The solver itself, in Section 5.6.

- To examine explicit optimization across not just motion, but process control, I develop in Chapter 6 a 3D Printer that models polymer melt flows (Section 6.5). I extend the solver to include these physics in Section 6.9. This demonstrates a few things:

  - Controlling machines via direct optimization against their real-world physical constraints is possible.

  - Model-based control of machines can provide useful feedback to machine users and designers, in Section 6.11.2 and Section 6.11.3.

- In Section 6.8, I show how models and heuristics can be combined to complete a feedback-based digital fabrication workflow (Section 6.3). I evaluate this, and flow models, by printing a number of different materials with a number of different nozzles in Section 6.10.1.

- I compare these prints to a state of the art workflow, showing that it captures existing practice and (on the metric of speed) out-performs it.

- By articulating goals rather than outcomes, optimization-based workflows allow us to productively explore wider swathes of parameter space.

- Combining heuristics with models allows us to extend those heuristics across a range of underlying physical constraints, reducing the total number of parameters that must be tuned in order to generate successful outcomes (Section 6.11.1).

- I (accidentally) show that model-based printing workflows can correct for human errors, i.e. mislabelling a machine component (Section 6.11.5).

- In Section 7.3 I develop model-based tools for machine designers for the purposes of motor selection and motion control parameter selection. In Section 7.5.2, I demonstrate the use of these tools (in combination with tacit knowledge) as I commission a CNC Milling machine.

- I show how even simple motion controllers, once exposed as software objects that can be used virtually, can provide valuable feedback and insight to machine users (Section 7.4.1), especially when coupled with machine models (Section 7.4.2).

- In all of these exercises, machines build their own models. I also use the same hardware and software architecture in both the 3D Printer and CNC Milling Machine, demonstrating flexibility of the tools developed for this thesis.

Machine control is an old field that represents many years of collective effort, and I am proposing to replace some of its core assumptions. This is possible now largely due to the ever increasing ubiquity of computing: the processing power we can now buy for less than one dollar and integrate into a motor driver outstrips (by a few orders of magnitude) what was available at an institutional level when GCode was first developed. This new availability, alongside years of my colleagues’ work, leads to the handful of new contributions that I have been able to make.

All told, many of the components or key ideas in the systems in this thesis already exist piecewise in the state of the art, but they are seldom integrated into complete workflows. This too is largely due to GCode’s persistence: it often prevents researchers from taking their contribution in, e.g., motor control, model building, or motion control and applying it in an end-to-end manner. The overarching goal here is to help us imagine what is possible if we can manage this systems integration task, to connect machines, their models, and their users (and designers!) together across all of their emergent behaviours.

References

[1] In this thesis, I will take Digital Fabrication Equipment to mean CNC Machines, 3D Printers or other direct-write fabrication equipment (not including automation equipment more broadly).

[2] That’s about 0.14 unfilled manufacturing jobs per hundred people in the USA, and 0.07 per hundred in Canada.

[3] A “trap for young players” is a term of art for surprising or non-intuitive design patterns, issues, or strategies that are commonly known to initiates in the dark arts, but cause issues for newcomers. As coined by the venerable Dave Jones at the EEVblog YouTube channel.

[4] A GCode “program” is perhaps not actually a “program” since GCodes are (often) not Turing-complete, i.e. they are more just a series of operations, rather than a proper computing language. We can fix this.

[5] Instruction Set Architectures encode the lowest level operations that a given computing device can execute: add, compare, move, etc. x86 (originally developed by Intel) runs most PCs and was the dominant architecture until ARM (developed in Cambridge, UK and licensed worldwide) arrived, which offers a simpler set of instructions and is favourable in low-power devices. As it turns out, simpler ISAs are preferred in modern designs because they are more deterministic and easier to predict: instructions in complex ISAs like x86 can take more than one clock cycle to complete, for example, which makes compiler-level optimizations more difficult to articulate. The new trend is towards RISC-V (Reduced Instruction Set Computer, 5th Generation), which is open source and even simpler than ARM.

[6] Mill/Turn machines are many-machines-in-one: they can operate as a lathe (“turn”) or a CNC mill, and they commonly mix modes. Often they have up to 12 axes, each of which can collide with others depending on configuration, the tooling that is installed, and of course the work piece.

[7] Where state-of-the-art machines do use sensing and feedback it is normally relegated to one subsystem: for example most industrial machines have encoders for positional feedback on each axis, and some high-precision equipment measures temperature along each axis - but these are used in the subsystem that positions that respective axis. In this thesis, I try to close a longer loop across the machine’s global controller, using matched computational models of the whole system (process and motion).

[8] We call the low-level computer codes that run on microcontrollers ‘Firmware’ - the name reflects the difficulty in changing it, and its closeness to hardware. Firmwares typically run on small computing environments (which today means MHz and MBs), vs. Software which normally runs on, e.g., a laptop, on top of an operating system. Firmwares are an important component in any hardware system, since they allow programmers to have tight control over their program’s timing. Operating systems and high-level language interpreters (on the other hand) sometimes interrupt program execution to switch threads or run garbage collection routines.